There are a lot of terms in the internet marketing, website structuring, and SEO industry; some you may be familiar with, while others may have you scratching your head. Below you will find the definitions we put together for both our clients and visitors, to give a better understanding of what an industry term means when you see or hear it. This list may evolve over time as new methods, updates, and monikers are born, and it offers some insight into highly technical words that aren’t part of most people’s everyday language.

What is the Definition of…

301 Redirect

Anchor Text

Authority Site

Backlinks

Blackhat SEO

Bounce Rate

Cloaking

Crawling

Deindexed

Dofollow

Duplicate Content

Footprint

Google Algorithm Update

Google Panda Update

Google Penguin Update

Header Titles

Keyword Density

Keyword Stuffing

Landing Page

Link Authority

Linkbait

Link Diversity

Link Farm

Link Relevancy

The term 301 redirect refers to a permanent URL redirection, also commonly called URL forwarding. The name comes from the HTTP status code 301 (“Moved Permanently”) that the server returns. This technique makes a web page accessible under an entirely different address. 301 redirection also allows for domain redirection/forwarding, which is often used when the same owner holds a name under several similar top-level domains (TLDs). As an example, if you type webdigia.org into your browser, it will redirect you to our main website, webdigia.com. The 301 technique is also ideal for preventing broken links or shortening URLs when web pages or websites are moved or redesigned. In some cases it is exploited for phishing or other deceptive purposes, where a hyperlink that appears to be about one thing actually redirects to something completely different, such as an offer, sales, or landing page.
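The domain forwarding described above can be sketched in a few lines of Python. This is only an illustration, not how our server is actually configured: the `CANONICAL_HOSTS` mapping, the `redirect_for` helper, and the use of `https` are all assumptions made for the example.

```python
from http import HTTPStatus

# Hypothetical mapping of alternate domains to the main domain (illustration only).
CANONICAL_HOSTS = {"webdigia.org": "webdigia.com"}


def redirect_for(host, path="/"):
    """Return (status_code, headers) for a permanent redirect, or None if none applies."""
    target = CANONICAL_HOSTS.get(host.lower())
    if target is None:
        return None  # Host is already the main domain (or unknown): serve normally.
    # 301 tells browsers and crawlers that the move is permanent.
    # The https scheme is an assumption for this sketch.
    location = f"https://{target}{path}"
    return HTTPStatus.MOVED_PERMANENTLY.value, {"Location": location}
```

A real web server or framework would send this status code and `Location` header in its response; the helper only shows which values are involved.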

Also commonly referred to as link text or link title, anchor text is the text that you can click on in an active hyperlink. The words chosen for the anchor text are important for relevancy in SEO/backlinks and are a factor in the ranking that a website or web page will receive. Search engines weight anchor text highly because the text within the hyperlink is usually very relevant to the page it directs to. As an example, the link at the end of this sentence directs to our homepage with the anchor text “Webdigia,” which is directly relevant to the page it points to: Webdigia.

The term authority site refers to a website that aims to be the go-to source or “authority” on the topic that it covers. An authority website also tends to be trusted by major search engines like Google, and it often receives quick indexing and high ranking because of that authority. Every website that is created and marketed should be set up with the intention of being an authority site: built with quality in mind, to provide the visitor value, and not set up only to earn from advertisements.

All of the links that point to a website or web page from elsewhere are referred to as backlinks. Backlinks may also be called inbound links or incoming links; however, ‘backlinks’ is the most common term. Backlinks differ in quality, anchor text, whether they are text links or image links, and more. Backlinks are a large part of search engine optimization on today’s web, and each one acts as a vote toward a website’s or web page’s authority, which also impacts ranking.

Originally termed spamdexing, blackhat SEO is the deliberate and aggressive exploitation and manipulation of search engine indexing and ranking. These tactics usually don’t abide by search engine policies. There are many different methods, both past and present, including cloaking, keyword stuffing, and the use of programs that automatically create low-quality, spammy backlinks and content. Search engine algorithms constantly evolve to detect blackhat SEO techniques and will flag and penalize websites that have a large blackhat SEO footprint. This type of SEO often results in removal, deindexing, or sandboxing by search engines; it is neither viable for longevity nor recommended.

Bounce rate is a metric derived from the analysis of website traffic. Used by internet marketers and website owners, it is displayed as a percentage: the share of visitors who enter a website and then leave (bounce) without ever traveling to other pages. A bounce may occur when a visitor clicks an advertisement, closes their browser, follows a hyperlink to another website, or clicks the back button. Bounce rate is widely believed to be one of the signals that search engine algorithms use to judge relevancy and ranking, and it can be monitored using visitor tracking software. A lower bounce rate is better because it often means that the website retains visitors and keeps their interest, which is usually associated with providing value. Bounce rate can also be used to analyze and optimize a landing page or other call to action. Typically, a bounce rate of around 50% is average; 60% and over is reason to be concerned.
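As a rough illustration of the percentage described above, the calculation can be sketched in Python. The function name `bounce_rate` and its parameters are hypothetical; real tracking software may define a session or a bounce somewhat differently.

```python
def bounce_rate(single_page_visits, total_visits):
    """Percentage of visits that left without viewing a second page."""
    if total_visits <= 0:
        raise ValueError("total_visits must be positive")
    if not 0 <= single_page_visits <= total_visits:
        raise ValueError("single_page_visits must be between 0 and total_visits")
    return single_page_visits / total_visits * 100


# 30 of 120 visits left after one page.
print(bounce_rate(30, 120))  # 25.0
```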

Cloaking is a black hat SEO technique used to deceive search engines and their site-crawling spiders. The content that is shown to the search engine is different from what is loaded when an actual user visits the website. A script located on the server serves different versions depending on whether it detects a search engine spider, typically by checking the visitor’s IP address or the User-Agent HTTP request header. This is primarily done to try to trick search engines into indexing, and giving a higher ranking to, a site that only appears to be relevant.

Also known as spidering, crawling is done by web crawlers, which are computer programs that travel the internet reading certain sets of data. Web crawlers are often referred to as spiders, bots, ants, or robots. The process is used primarily by the major search engines to index and rank websites according to their algorithms. Crawling may also be done by those who use spam tactics; for example, spiders can gather email addresses from websites and compile them into a list.

The term deindexed in the SEO community refers to the removal of a website from the search engine index. When a website engages in black hat or spammy SEO practices, is infected by viruses, or has other negative properties, a search engine algorithm may trigger a manual review in which the website or a group of linked websites (e.g., a link farm) is blacklisted from the search results index. When a website has links built to it too quickly, or gains a lot of low-quality links too fast, search engine marketers often call the resulting drop being “sandboxed.”

The term dofollow is the opposite of the nofollow value of an HTML link’s rel attribute; however, a dofollow value doesn’t actually exist. A hyperlink that simply lacks the nofollow value is referred to as a dofollow hyperlink. When the nofollow value is absent, search engines will count the hyperlink, which can positively affect search engine ranking and PageRank.

Duplicate content is an article or entire website that is a direct copy of content from another website. When an article, sales copy, blog post, or other content is placed on the web, it is only fair that the content be owned by and attributed to the original author. Unique content has grown to be a primary element of a successful SEO campaign, and it also creates more indexed pages and authority for a website. Duplicate content can negatively affect your SEO rankings or cause your website or web page to be completely deindexed.

When you walk through the mud or step in paint, you leave behind a footprint or multiple footprints. The same goes for SEM/SEO. When you create backlinks you will leave a footprint that a search engine algorithm will analyze to understand whether it appears to be natural or not. A backlink profile and strategy must be as natural as possible. If all of your backlinks are created from the same IP address and on websites from the same hosting provider or website owners, it may raise some red flags that your backlinks and ranking are being manipulated in a spammy way. The end result of an unnatural footprint could be a penalty, loss of rankings, or devaluation of the backlinks altogether.

Google periodically implements significant changes to its search engine results ranking algorithm, known as updates. Each change aligns with its goal of removing as much spam as possible, along with blackhat SEO tactics that ‘game’ the search engine results through link manipulation. With each new change, Google intends to offer the end user (searcher) the most relevant search results possible, improving the experience for its users. There are no announced timeframes for when an update may be launched, and little is known about the inner workings of the highly protected search engine algorithms beyond what SEO experts infer from split tests and current SEO trends.

The Google Panda Update was launched in February of 2011 as a direct attack on low-quality websites. Panda sought to lower the rankings of thin sites, meaning sites that lacked real value (for example, lots of advertisements with few indexed pages and little content). Google did provide webmasters with a series of twenty-three points to help affected sites recover, abide by the Panda update, and receive better search engine ranking. The update was said to have affected around 12% of all search results, and many smaller tweaks have been implemented since then to work out ranking issues or bugs that the algorithm initially caused.

The Google Penguin Update was released on April 24, 2012 and was a follow-up to the Google Panda Update. Penguin was an update to the search engine ranking algorithm that sought to decrease the rankings of websites using blackhat SEO techniques such as keyword stuffing, cloaking, over-optimization of anchor text in backlinks, paid link schemes and networks, intentional duplicate content, and more. These techniques are considered direct violations of Google’s policies. The update affected somewhere around 3% of all search engine results.

The term header titles, or header tags, relates to the use of the H1 through H6 heading HTML elements. For SEO purposes the H1 through H3 elements are the most important for optimization, with the H4 growing in importance as well. Header titles are another signal that tells search engines what the page is about, in order of importance. Do not use header tags simply as a way to get larger or bold text; this is not proper use of the tags.

Keyword density refers to the number of times, expressed as a percentage, that a keyword is used on a web page in relation to the total number of words on that same page. Keyword density is one factor that search engines have used to determine relevancy for keyword phrases; when relevancy is higher, rankings tend to be higher as well. Today it is weighted less heavily because the method is easy to exploit, but it is still an important factor in onpage SEO and ranking. The industry standard is between 1 and 3 percent, and it can be calculated by dividing the number of times the keyword is repeated by the total number of words on the page and then multiplying the result by 100.

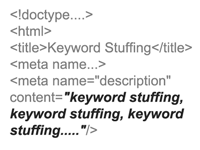
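The keyword density calculation described above (occurrences divided by total words, multiplied by 100) can be sketched in Python. The `keyword_density` function and its simple word-splitting rule are assumptions for illustration; SEO tools may count words and phrases differently.

```python
import re

# Simplified assumption: a "word" is a run of letters, digits, or apostrophes.
WORD = re.compile(r"[a-z0-9']+")


def keyword_density(text, keyword):
    """Return how often `keyword` appears, as a percentage of all words in `text`."""
    words = WORD.findall(text.lower())
    phrase = WORD.findall(keyword.lower())
    if not words or not phrase:
        return 0.0
    size = len(phrase)
    # Count every position where the (possibly multi-word) keyword starts.
    hits = sum(
        1 for i in range(len(words) - size + 1) if words[i:i + size] == phrase
    )
    return hits / len(words) * 100
```

For example, a keyword that appears twice on a 100-word page works out to a density of 2 percent, which falls inside the 1 to 3 percent range mentioned above.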

Keyword stuffing is a method that once worked to inflate keyword rankings; however, it is black hat in nature, is outdated, no longer provides any value, and can actually harm your rankings. Keyword stuffing means repeating valuable keywords many times, unnaturally, in meta information and in content that is either visible or invisible to the visitor. The intention of this practice is to inflate rankings for each keyword and bring in traffic. Some black hat formatting examples include making text the same color as the background so that it appears invisible, or using the CSS z-index property to position the text behind a graphic on the page. Search engines can now detect these tricks.

Also known as a lead capture page, a landing page is a web page used for internet marketing, most commonly for pay-per-click (PPC) marketing and other advertisements or lead generation. It is a tool to capture leads effectively and usually contains sales copy, testimonials, persuasion, and clear calls to action. Landing pages are monitored by internet marketers, who can determine an ad campaign’s success rate by looking at bounce rates and conversion rates. The landing page will often entice a visitor and withhold just enough information until the visitor completes a submission form, purchases a product or service, or takes some other action. Many changes may be made to a landing page during a campaign (A/B testing) in order to optimize for the best conversion rate possible.

The quality of a backlink is extremely important when it comes to building backlinks. You can’t necessarily review and moderate who links to your website organically, but you can do so when undergoing an SEO campaign. Link authority relates to the quality of the link. Some of the biggest factors when considering link authority are the authority level of the main domain, whether or not it’s a dofollow link, whether it is a contextual link inside content, the PageRank of the page your link is created on, how old and trusted the website is where your link exists, whether it’s considered a spammy link, whether it is a 301 redirection or rel canonical, and much more. A proper link profile will consist of varying link authorities, from high quality to average to low quality, but never spammy.

The term linkbait refers to any form of content or call to action that is used to generate viral attention and encourage others to link to the website or web page. Linkbait may take the form of a video, a giveaway, an audio clip, or some text or picture that may not be found anywhere else on the web. From an internet marketing perspective it is an ethical and powerful way to get people’s attention and potentially create a lot of organic attention and backlinks in the process.

Search engine algorithms and quality checks have evolved over time to make sure that guidelines against spam and manipulative link building are adhered to. Link diversity relates to a “diverse” backlink profile that does not consist of just one type of link. If all of your backlinks are from blog comments, this could raise red flags that your link building is being done in a way that does not appear natural. Proper link building and SEO involve link diversity and acquiring backlinks from many different sources, both organically and manually. A good link diversity profile could consist of backlinks from guest posts, press releases, forums, videos, social networks, infographics and other images, web 2.0 properties, and natural links to you as an authority source.

Once a very popular method for quickly increasing backlink counts, link farms are groups of websites that all link to each other. Link farms are considered a spamdexing technique; they are often created using automated programs and provide little to no benefit to the users of a search engine. Google’s algorithm now has quality checks in place to identify patterns such as every website in a group linking to every other, in order to discredit the links or deindex the website(s) entirely.

Something that has grown increasingly important is the element of link relevancy. In the past a link was weighted fairly uniformly even if that link was not on a relevant website or surrounded by relevant text. Today, link relevancy is crucial to link authority. The origin of your backlink should be on a relevant page and in a relevant context to your website, where you are ideally being linked to as the authority or ‘source’ (accreditation) of that information or anchor text.

Link velocity describes the speed (velocity) at which you build backlinks to your website(s). It is important to remember that quality is greater than quantity and that a solid long-term approach to your backlink portfolio will always beat a burst of activity that then goes stagnant. For example, building 5 high-quality backlinks per day is a proper long-term approach, whereas building 2,000 poor-quality backlinks in a month and then having them disappear or negatively impact your site is not.

The term link juice refers to the power and quality of a website’s backlink footprint or profile. Link juice reflects the number of backlinks and their quality on factors such as PageRank. The more powerful the backlink, the more link juice it has to pass on to your site.

One of the most overlooked and underutilized concepts in SEO is long tail keywords. Long tail keywords relate to your main focus, but they are longer and more specific than your main keywords and can bring in additional traffic. Long tails (for short) often convert better as well. For example, if the main keyword you want to rank for is “kids hats,” a long tail keyword could be “buy kids hats” or “blue kids hats.” These types of keywords not only add additional streams of traffic, but also tend to bring in far more targeted visitors.

Meta is short for metadata. Metadata refers to data about data and is attached to web pages, videos, images, and more. In an image, metadata may include ownership information, copyright, and even keywords about the photo embedded into the digital file. For a website or web page, metadata for SEO purposes means meta tags (meta elements). Meta tags are used to provide descriptions and keywords so that search engines can parse these attributes and index and rank a website according to its relevancy.

HTML links can contain a rel (relationship) attribute. A nofollow value informs search engine spiders that the hyperlink should not affect the search engine ranking or PageRank of the website it links to. This value is effective at helping to reduce the amount of black hat SEO and spam present on the internet. The hyperlink will still function as normal and be clickable to the destination; however, Google’s Matt Cutts has said that it will not affect search engine ranking placement. It is proper and natural, however, to have a healthy mix of both nofollow and dofollow backlinks in a website’s backlink profile.
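To make the nofollow value more concrete, here is a minimal Python sketch that reads the links in a piece of HTML and labels each one. The `LinkClassifier` class and `classify_links` helper are hypothetical names for this example, and “dofollow” is used only as the informal label for a link without the nofollow value.

```python
from html.parser import HTMLParser


class LinkClassifier(HTMLParser):
    """Collects (href, label) pairs for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel can hold several space-separated values, e.g. "nofollow noopener".
        rel_values = (attributes.get("rel") or "").lower().split()
        label = "nofollow" if "nofollow" in rel_values else "dofollow"
        self.links.append((href, label))


def classify_links(html):
    parser = LinkClassifier()
    parser.feed(html)
    parser.close()
    return parser.links
```

Running `classify_links` over a page’s HTML gives a quick view of the mix of nofollow and dofollow links it contains.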

Named after Larry Page, PageRank is a numerical score from 0 to 10 that is calculated by Google’s algorithm. PageRank updates approximately every three months; however, there is no definitive timeline, and it has sometimes gone eight months without a new update. The more high-PageRank sites that link to your website, the higher your PageRank will be, because these backlinks act as votes for your website. PageRank is one measure of how Google gauges the trust and authority of your website, and it is also one of hundreds of factors that come into the equation for search engine optimization. Want to learn more about PageRank and find out what your website’s PR is? Visit our in-depth blog post on what is Google PageRank.

The title of a website or web page is an important factor in the relevancy of a website. When an end user submits a search query to a search engine, the titles also appear in the results. A title needs to be optimized both for the search engine and for the searcher in order to grab a reader’s attention. When possible, a title should be kept to roughly 60 characters or fewer, since search engines tend to truncate longer titles in their results; the commonly cited limit of about 160 characters applies to the meta description instead.

The term permalinks is short for permanent links. In the past, when pages were static, all links were permanent; however, the web has evolved toward more and more dynamic websites that are ever changing. In everyday SEO use, the permalink is the portion of the URL after the TLD (.com .net .org …). Permalinks should be readable by a human visitor as well as optimized for search engine marketing. Many blogging platforms and content management systems, such as WordPress, do not use optimized permalinks by default.

Rel canonical refers to the canonical link element, which was first introduced in 2009 and was devised to prevent duplicate content issues by giving the most authority to the original source. A canonical element is placed in the head section of a website’s page, but it is still only a recommendation, and the web crawler will make the final determination. Rel canonical allows a redirection of authority without redirecting the user to another page. If a web page contains a rel canonical pointing to another website, that page is still visible on the domain it is on, but its authority passes to the page listed in the rel canonical as the source.

The robots.txt protocol (sometimes mistakenly written robot.txt) uses a file that can stop web spiders/robots from analyzing or viewing certain parts of a website that are still viewable by a human visitor. A robots.txt file should be placed in the top-level directory and is often used in unison with a sitemap. With a robots.txt file, a webmaster can give instructions to web spiders or robots on what content should be ignored or not indexed, which helps keep out content that is not entirely relevant to the overall theme of the website for SEO purposes.
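As an illustration of how a well-behaved crawler reads these instructions, the sketch below uses Python’s standard `urllib.robotparser` module. The file contents and the example.com URLs are made up for the example, and `site_maps()` assumes Python 3.8 or newer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block every crawler from /private/ and list a sitemap.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Sitemap: https://example.com/sitemap.xml
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a rule set a crawler can query."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


rules = load_rules(ROBOTS_TXT)
print(rules.can_fetch("*", "https://example.com/blog/post"))     # True
print(rules.can_fetch("*", "https://example.com/private/page"))  # False
print(rules.site_maps())  # ['https://example.com/sitemap.xml']
```

Note that these rules are a request rather than an enforcement mechanism: the blocked pages remain reachable by a human visitor, and a crawler that ignores the file can still read them.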

The term SEM stands for search engine marketing. SEM is a form of the broader term internet marketing; it is an umbrella term closely associated with SEO, or search engine optimization. Search engine marketing is the process of onsite and offsite optimization to increase the rank or visibility of a website in the search engine results.

SEO/Search Engine Optimization

Most of the terms on this page stem from the main topic and focus of internet marketing, which is search engine optimization, or SEO for short. SEO is constantly evolving, and what worked six months ago may no longer be a viable option, as the quality checks in search engine algorithms are constantly being tweaked to provide the best search results possible for the end user. SEO is the process of improving a website’s position in a search engine for keywords that relate to that website’s topic of focus, product(s), or service(s). There is both onpage SEO and offpage SEO, and hundreds of metrics come into play, including the age of a website, its PageRank, the number of incoming backlinks, the quality of those backlinks, content, meta info, header markup, bolding, and much more. The goal of SEO is to create or increase natural organic traffic from searchers on the major search engines like Google, Yahoo! and Bing.

SERP stands for search engine results page and is most often used in the plural (SERPs). When an end user submits a search query using a string of words (referred to as a keyword) to a search engine, it returns a listing of the top 10 websites for that search term as well as other results on the page. When you see the initial search query results, you are on page one of the SERPs. The SERPs contain organic listings, map listings, and paid listings (sponsored links, PPC). The search engine results page shows listings including descriptions, titles, and links for each result, and words may be bolded to show their relevance to your search query.

Siloing is a method of structuring a website that substantially strengthens its overall theme, authority, and relevancy. This is an advanced form of search engine optimization and is recommended as the base of a campaign. As an example, a silo is much like creating a new folder on your desktop and naming it birthday pictures. Inside the folder you may have several birthday pictures, or you may take it a step further and silo the folder with deeper layers, such as other folders within the birthday folder. Some of these may be titled birthday vacation, daughter’s 14th birthday, and so on. Another example would be the chapters of a book. This method carries high SEO authority because search engines rank websites based on relevancy and authority surrounding targeted keywords. They analyze the content on a page and also take into consideration the rest of the website and its structure of relevant content. There needs to be a clear and relevant site outline, including the title, the table of contents, the overall theme, and then each sub page off of that theme. This is the foundation of a proper SEM strategy.

A sitemap is a list of a website’s pages that can be read by crawlers, end users, or both. XML sitemaps are documents that spiders crawl and parse. Sitemaps are essential for SEO because they tell search engines which pages exist and improve the odds that those pages are crawled and indexed. A robots.txt file can be used to point crawlers to the sitemap, or you may submit a sitemap through Google Webmaster Tools, for example. Including a sitemap is especially important on Flash websites, because they offer little content that crawlers can read. All of the major search engines support the use of sitemaps. Furthermore, XML sitemaps have replaced manual website submission to each search engine.
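For readers curious about what an XML sitemap looks like, the following Python sketch builds a very small one with the standard library. The `build_sitemap` helper and the page URLs are assumptions for illustration; a production sitemap would normally also carry an XML declaration and may include optional fields such as the last modification date.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(page_urls):
    """Return a minimal XML sitemap (as a string) listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in page_urls:
        entry = ET.SubElement(urlset, "url")
        # <loc> holds the full address of one page.
        ET.SubElement(entry, "loc").text = page_url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages used only for this example.
print(build_sitemap(["https://example.com/", "https://example.com/blog/"]))
```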

The term site structure describes the way a website is set up. Site structure is important both for how a visitor will use a website and for ensuring that the website is understood correctly by web and search engine spiders, so that it is properly optimized for search engine marketing. One site structure method that is both user friendly and search engine optimized is known as siloing.

As you may know, content is king online and is often the highest priority for getting organic backlinks and shares and for creating new backlinks via guest posts and other methods. With increasing content needs, shortcuts were created. Spun content is most often generated by programs such as “The Best Spinner”; however, free versions exist online. An article may be written or scraped from the internet, and then it is input and ‘spun’ at the word or sentence level in order to make a new, seemingly unique article based on the first one in very little time. Spun content, however, is often low quality, poorly formatted, difficult to read, and unnatural.

{this|is|spin|syntax} – When the backlink program processes the spin syntax, it will output one of the words from that group.

Example of spun content:

Spin Syntax – “This {is|article is|post is|page is} a {great|awesome|excellent} SEO resource from {Webdigia|Webdigia.com}”

Would generate – “This article is a great SEO resource from Webdigia”
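The way a program processes spin syntax can be sketched in Python as follows. This is a simplified illustration, not the code of any particular spinning tool; the `spin` function and its optional `rng` parameter are assumptions for the example.

```python
import random
import re

# Matches one {a|b|c} group that contains no nested braces.
SPIN_GROUP = re.compile(r"\{([^{}]*)\}")


def spin(template, rng=None):
    """Replace each {a|b|c} group in `template` with one randomly chosen option."""
    if rng is None:
        rng = random.Random()
    text = template
    while True:
        # Innermost groups are resolved first, so nested groups also work.
        text, replaced = SPIN_GROUP.subn(
            lambda match: rng.choice(match.group(1).split("|")), text
        )
        if replaced == 0:
            return text


template = (
    "This {is|article is|post is|page is} a {great|awesome|excellent} "
    "SEO resource from {Webdigia|Webdigia.com}"
)
print(spin(template))  # One of the 24 possible sentences, chosen at random.
```

Because every option is picked at random, each run can produce a different sentence, which is why spun content only looks unique on the surface.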

The abbreviation TLD stands for top-level domain, which sits at the highest level of the hierarchy in the Domain Name System. For example, in our domain name, webdigia.com, the TLD is the “.com” portion. ICANN is the organization responsible for the management of the top-level domains.

This term may be self-explanatory: user experience refers to how an end user (a searcher, a visitor to your website, etc.) experiences interacting with your content. Their impression, and how streamlined the experience is, will affect your conversion rates. A better user experience will in most cases improve your visitors’ average time on page, sales, and signups. User experience is often made up of the functionality of a website, its structure, its navigation, and its ability to give the visitor everything they need without much frustration.

Whitehat SEO is the exact opposite of blackhat SEO and involves ethical, non-spammy search engine optimization techniques. These tactics are carried out with the intent of providing value and focusing on the audience rather than creating content and backlinks solely for search engines and ranking. Whitehat SEO follows search engine policies as closely as possible and is focused on the long-term rewards that SEO can provide. Whitehat SEO usually requires substantially more time and resources, but it is all about longevity and the reward of stable, ethical SEO that won’t result in being deindexed or penalized.